AI's Conservative Bias Threat: A Deeper Dive

Artificial intelligence presents a unique challenge to conservative values and free speech, requiring immediate attention.

The Algorithmic Iron Curtain: AI's Conservative Problem

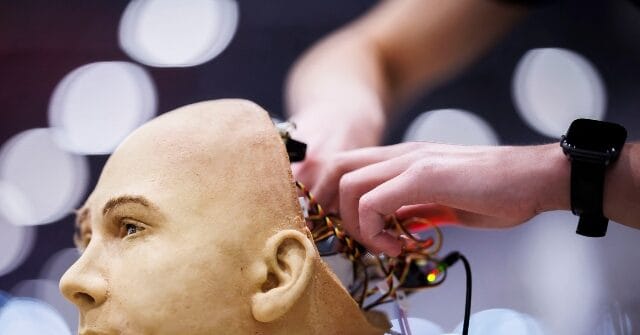

Artificial intelligence (AI) is rapidly transforming every facet of modern life, from healthcare and finance to education and entertainment. However, this technological revolution poses a significant, and often overlooked, threat to conservative principles and free speech. The concern isn't about robots becoming sentient and staging a rebellion, but rather the subtle, yet pervasive, biases embedded within AI algorithms that can systematically disadvantage conservative voices and viewpoints.

The fundamental issue lies in the data used to train these AI systems. AI learns by analyzing vast datasets, identifying patterns, and making predictions based on those patterns. If these datasets are skewed, reflecting existing societal biases or the political leanings of the developers, the resulting AI will inevitably perpetuate and amplify those biases. This can manifest in various ways, from search engine results that prioritize liberal sources to social media algorithms that suppress conservative content.
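To make the mechanism concrete, here is a minimal sketch in Python of how a skew in training data can carry over into predictions. It is a toy illustration, not any platform's actual system: the token names (`topic_a`, `topic_b`), the hand-made training set, and the helper functions `train_flag_rates` and `predict_flag` are all hypothetical.

```python
from collections import defaultdict

def train_flag_rates(examples):
    """Learn, per token, the fraction of training posts containing it that were flagged."""
    seen = defaultdict(int)
    flagged = defaultdict(int)
    for tokens, is_flagged in examples:
        for token in set(tokens):
            seen[token] += 1
            flagged[token] += int(is_flagged)
    return {token: flagged[token] / seen[token] for token in seen}

def predict_flag(rates, tokens, threshold=0.5):
    """Flag a post if the average learned rate of its known tokens exceeds the threshold."""
    known = [rates[t] for t in tokens if t in rates]
    if not known:
        return False
    return sum(known) / len(known) > threshold

# Hypothetical, hand-made training set: every "topic_a" post happens to be
# flagged, partly because of how the sample was drawn and labeled.
skewed_training = [
    (["topic_a", "insult"], True),
    (["topic_a", "insult"], True),
    (["topic_a", "policy"], True),   # benign wording, but labeled as flagged
    (["topic_b", "policy"], False),
    (["topic_b", "policy"], False),
    (["topic_b", "budget"], False),
]

rates = train_flag_rates(skewed_training)

# Two new posts with the same benign wording, differing only in topic.
flag_a = predict_flag(rates, ["topic_a", "policy"])
flag_b = predict_flag(rates, ["topic_b", "policy"])
print(flag_a, flag_b)
```

In this toy setup the model never sees an unflagged `topic_a` post, so it treats the topic itself as a signal and handles two otherwise identical posts differently. Real systems are far more complex, but the same kind of pattern can arise whenever labels correlate with a topic in the training sample.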

This isn't merely a hypothetical concern. There is growing evidence suggesting that AI systems are indeed exhibiting a liberal bias. A 2022 study by researchers at Indiana University found that several popular AI language models, including Google's BERT and OpenAI's GPT-3, demonstrated a statistically significant left-leaning bias on political questions. This bias was evident even when the models were trained on ostensibly neutral datasets.

Furthermore, the lack of transparency in AI development exacerbates the problem. The algorithms that power these systems are often proprietary and closely guarded secrets, making it difficult to identify and correct biases. This lack of accountability allows these biases to persist and potentially worsen over time, further marginalizing conservative viewpoints.

Echo Chambers and Algorithmic Censorship

One of the most concerning aspects of AI bias is its potential to create echo chambers, where individuals are primarily exposed to information that confirms their existing beliefs. Social media algorithms, for instance, are designed to personalize users' experiences by showing them content they are likely to engage with. While this can be convenient, it can also lead to a situation where users are only exposed to information that aligns with their political views, reinforcing their biases and making them less receptive to opposing perspectives.

This phenomenon is particularly problematic for conservatives, who often find themselves at odds with the prevailing narratives in mainstream media and academia. If AI algorithms are biased against conservative viewpoints, they may be less likely to surface conservative content, further isolating conservatives within their own echo chambers and limiting their ability to engage in meaningful dialogue with those who hold different beliefs.

Beyond echo chambers, there are also concerns about algorithmic censorship, where AI systems actively suppress or deplatform conservative voices. While social media companies often claim to be neutral platforms, their content moderation policies are frequently enforced by AI algorithms that are susceptible to bias. As a result, conservative content can be unfairly flagged as hate speech or misinformation and then removed or demonetized.

The consequences of algorithmic censorship can be severe. It can silence dissenting voices, stifle free speech, and undermine the ability of conservatives to participate in the public square. It can also create a chilling effect, discouraging conservatives from expressing their views online for fear of being censored or deplatformed.

The Business of Bias: Incentives and Ideology

Understanding the source of AI bias requires examining the incentives and ideologies of the individuals and organizations that develop these systems. The tech industry is overwhelmingly dominated by individuals who identify as liberal or progressive. A 2020 study by the Pew Research Center found that 72% of tech workers identify as Democrats or lean Democratic, compared to just 22% who identify as Republicans or lean Republican. This ideological imbalance can influence the design and development of AI systems, leading to biases that reflect the values and perspectives of the dominant group.

Furthermore, the business models of many tech companies incentivize them to cater to the preferences of their users, even if those preferences are based on biased or inaccurate information. Social media companies, for instance, rely on engagement to generate revenue. If users are more likely to engage with content that confirms their existing beliefs, the algorithms will prioritize that content, even if it is biased or misleading. This creates a feedback loop that reinforces bias and makes it difficult to break free from echo chambers.
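The feedback loop described above can be sketched as a small deterministic simulation. This is a simplified model under stated assumptions, not a description of any real ranking system: the function `simulate_feed`, the two content types, and the engagement probabilities are hypothetical, and the ranker is assumed to reallocate feed slots in proportion to the engagement each type earned in the previous round.

```python
def simulate_feed(engage_a, engage_b, share_a=0.5, rounds=20):
    """Track the share of feed slots given to content type A over time.

    engage_a / engage_b: assumed probability that the user engages with an
    item of each type (at least one must be positive). Each round, the ranker
    reallocates slots in proportion to the engagement each type earned in the
    previous round.
    """
    history = [share_a]
    for _ in range(rounds):
        earned_a = share_a * engage_a
        earned_b = (1.0 - share_a) * engage_b
        share_a = earned_a / (earned_a + earned_b)
        history.append(share_a)
    return history

# A user who engages somewhat more with type A than with type B.
history = simulate_feed(engage_a=0.6, engage_b=0.4)
print([round(share, 3) for share in history])
```

Under these assumptions, a modest difference in engagement compounds round after round: the feed starts evenly split, and the less-engaging content type is gradually crowded out. The point of the sketch is the compounding, not the specific numbers.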

The pressure to conform to prevailing social norms can also influence the development of AI systems. In today's climate, there is a strong social pressure to be politically correct and to avoid causing offense. This can lead to situations where AI developers intentionally or unintentionally bias their systems against viewpoints that are perceived as controversial or offensive, even if those viewpoints are legitimate and contribute to a healthy public debate.

Fighting Back: Strategies for Combating AI Bias

Combating AI bias requires a multi-faceted approach that addresses both the technical and the social dimensions of the problem. On the technical side, it is essential to develop methods for detecting and mitigating bias in AI algorithms. This includes using more diverse and representative datasets to train AI systems, developing algorithms that are less susceptible to bias, and implementing transparency measures that allow for independent auditing of AI systems.
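As an illustration of what an independent audit might measure, the sketch below compares false positive rates, meaning the share of non-violating posts that were flagged anyway, across two groups of content. Everything here is assumed for the example: the record format, the group labels, the sample data, and the function `false_positive_rates`. A real audit would also need a trustworthy ground-truth label for whether each post actually violated policy, which is often the hardest part.

```python
from collections import Counter

def false_positive_rates(records):
    """Compute, per group, the share of non-violating posts that were flagged.

    records: iterable of (group, was_flagged, violates_policy) tuples.
    """
    benign = Counter()
    wrongly_flagged = Counter()
    for group, was_flagged, violates_policy in records:
        if not violates_policy:
            benign[group] += 1
            if was_flagged:
                wrongly_flagged[group] += 1
    return {group: wrongly_flagged[group] / benign[group] for group in benign}

# Hypothetical audit sample; the values are made up for illustration.
audit_sample = [
    ("group_a", True, False), ("group_a", False, False),
    ("group_a", True, False), ("group_a", True, True),
    ("group_b", False, False), ("group_b", False, False),
    ("group_b", True, False), ("group_b", True, True),
]

fpr = false_positive_rates(audit_sample)
gap = fpr["group_a"] - fpr["group_b"]
print(fpr, round(gap, 3))
```

A persistent gap between groups on a large, representative sample would be one signal worth investigating, though it does not by itself establish the cause.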

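One promising approach is to use adversarial training techniques to expose and correct biases in AI systems. In adversarial debiasing, a second model (the adversary) tries to infer a sensitive attribute, such as the political leaning of a post's author, from the main model's outputs, and the main model is trained so that this inference fails. If the adversary cannot recover the attribute, the main model's decisions carry less information about it. This can help to make AI systems more robust and less susceptible to being influenced by biased data or malicious actors.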

Another important step is to promote greater diversity within the tech industry. By increasing the representation of conservatives and other underrepresented groups in the development of AI systems, we can help to ensure that these systems reflect a wider range of perspectives and values. This will require a concerted effort to recruit and retain talent from diverse backgrounds, as well as to create a more inclusive and welcoming environment for individuals who hold different beliefs.

However, technical solutions alone are not enough. We also need to address the social and cultural factors that contribute to AI bias. This includes promoting media literacy, encouraging critical thinking, and fostering a culture of open and respectful dialogue. We need to educate people about the potential for AI to be biased and empower them to critically evaluate the information they encounter online.

Furthermore, we need to hold tech companies accountable for the biases in their AI systems. This includes demanding greater transparency in the development and deployment of AI algorithms, as well as advocating for policies that promote fairness and accountability. We need to ensure that tech companies are not using AI to suppress free speech or to discriminate against individuals based on their political beliefs.

The Path Forward: A Call to Action

The threat posed by AI bias is real and growing. If we fail to address this issue, we risk creating a society where conservative voices are marginalized and silenced, where free speech is stifled, and where the public square is dominated by a single ideology. It is imperative that conservatives take this threat seriously and work to develop strategies for combating AI bias.

This includes educating ourselves about the potential for AI to be biased, advocating for greater transparency and accountability in the development and deployment of AI systems, and supporting organizations that are working to promote diversity and inclusion within the tech industry. It also means being willing to challenge the prevailing narratives in mainstream media and academia, and to speak out against censorship and discrimination wherever they occur.

According to a 2021 report by the Brookings Institution, AI bias can perpetuate discrimination in areas like hiring, lending, and criminal justice, leading to unequal outcomes for different groups of people. Furthermore, the report highlighted that biased AI systems can reinforce existing social inequalities, making it harder for marginalized groups to achieve economic and social mobility.

A 2023 study by Stanford University found that AI-powered facial recognition systems are significantly less accurate at identifying individuals with darker skin tones, raising concerns about the potential for these systems to be used to discriminate against people of color. The study revealed a failure rate of up to 50% for identifying darker-skinned individuals, compared to a failure rate of less than 1% for lighter-skinned individuals.

Another study, conducted by the AI Now Institute at New York University, revealed that AI-powered hiring tools can perpetuate gender bias, leading to fewer women being selected for job interviews. The study found that these tools often rely on historical data that reflects existing gender imbalances in the workforce, resulting in biased algorithms that favor male candidates.

The fight against AI bias will not be easy, but it is a fight that we cannot afford to lose. The future of free speech and conservative values depends on it. By working together, we can ensure that AI is used to promote fairness, equality, and opportunity for all, rather than to perpetuate bias and discrimination.

Former Google engineer James Damore was fired in 2017 after circulating an internal memo questioning the company's diversity policies, arguing that biological differences between men and women may contribute to the gender gap in tech. While his views were controversial, his case highlighted the challenges faced by conservatives in expressing their opinions within the tech industry.

The Media Research Center (MRC), a conservative media watchdog group, has documented numerous instances of alleged censorship and bias against conservative content on social media platforms. Their reports have highlighted examples of conservative posts being labeled as misinformation or hate speech, while similar content from liberal sources is allowed to remain online.

As AI continues to evolve, it's crucial to remember that technology is a tool. It amplifies the intentions, good or bad, of its creators. A vigilant and informed citizenry is the best defense against the weaponization of AI against conservative values.